Can leaders stop firefighting and see the pattern behind repeated problems? This guide offers a clear, practical path: a repeatable approach that links strategy, operations, and people’s day-to-day work.

Modern organizations face fast change from global forces, tech shifts, and climate risk. Linear fixes often miss links and create side effects. This guide offers tools to map connections, feedback loops, and leverage points for durable solutions.

The guide is written by practitioners who diagnose chronic issues, run mapping sessions, and turn insights into real change. Readers will get straightforward questions to ask, common diagram mistakes to avoid, and concrete scenarios that make concepts tangible.

What to expect: plain language, step-by-step methods, example tools, and candid notes on when this approach fits and when it does not. The goal is a practical way to improve decisions over time, combining the big picture with on-the-ground detail.

Why organizational complexity keeps rising in today’s business environment

Organizations no longer act in isolation; they respond to signals from a connected world. Global supply chains, platform ecosystems, remote work, AI tools, shifting regulation, and climate volatility all increase interdependence. These factors make routine decisions ripple farther and faster.

Leaders see this as repeated firefighting: a change in pricing, staffing, product scope, or vendor choice can shift workloads, costs, and customer expectations weeks later. Small fixes often create second‑order effects that feel mysterious at first.

- Connectedness examples: suppliers, partners, customers, and networks react to the same signals.

- Ripple effects: a sales incentive that speeds up orders can lower service quality elsewhere.

- Complicated vs. complex: complicated problems have many parts but behave predictably; complex situations adapt and shift as people respond.

“When parts adapt, top-down plans lose traction unless interdependencies are addressed.”

In practice, organizations behave like complex adaptive systems: people self-organize around incentives and information flows. Leaders cannot control every variable, but they can shape system conditions—policies, feedback, capacity, and decision rights—to reduce recurring problems and help the organization learn over time.

What systems thinking is in business and when it’s the right approach

Leaders who want durable fixes start by tracing how roles, tools, and incentives produce outcomes over time.

A practical definition focused on connections

Systems thinking is a disciplined way to understand how people, processes, tools, incentives, and information flows interact to produce outcomes over time.

How circular causality differs from simple cause-and-effect

Circular causality means actions change conditions and those changed conditions shape future actions.

For example, overtime can lower quality; lower quality creates more rework and more overtime. That loop keeps the problem alive.
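The loop above can be sketched as a tiny simulation. This is an illustrative model only: the function name, the parameter `rework_per_overtime_hour`, and all numbers are assumptions chosen to show the pattern, not measured values. When each overtime hour generates more than one hour of future rework, the loop feeds itself; below that threshold it fades.

```python
def simulate_overtime_loop(rework_per_overtime_hour, weeks=6,
                           planned_hours=100.0, capacity_hours=100.0,
                           initial_rework_hours=10.0):
    """Return overtime hours per week for a simple overtime -> rework -> overtime loop.

    All defaults are hypothetical values for illustration.
    """
    rework = initial_rework_hours  # rework carried into the first week
    overtime_by_week = []
    for _ in range(weeks):
        demand = planned_hours + rework                # planned work plus rework
        overtime = max(0.0, demand - capacity_hours)   # hours beyond normal capacity
        overtime_by_week.append(overtime)
        # Assumption: tired teams make mistakes, so each overtime hour
        # creates a fixed amount of rework in the following week.
        rework = overtime * rework_per_overtime_hour
    return overtime_by_week


growing = simulate_overtime_loop(rework_per_overtime_hour=1.2)  # loop amplifies
fading = simulate_overtime_loop(rework_per_overtime_hour=0.8)   # loop dies out
```

In this sketch the only difference between a problem that fades and one that keeps growing is the strength of the link from overtime to rework, which is why changing that condition matters more than working harder.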

Signals that the problem is a system, not a single team

- The same failure appears across different teams.

- Performance hinges on handoffs and shared processes.

- Incentives or metrics conflict across components.

Illustrative example: the broken gear diagnostic

A systems thinker inspects design, load and torque, environment, and maintenance routines rather than just swapping the gear.

That lesson maps to work: replacing a person or tool can help, but it fails if operating conditions remain unchanged.

| Focus | Symptom fix | System diagnosis |
|---|---|---|
| Root cause | Replace failing part | Assess design, load, environment, upkeep |
| Scope | Single team | Cross-functional connections and handoffs |
| Outcome | Short-term relief | Durable reduction in recurring problems |

Questions to ask: What connections drive this outcome? What loop keeps it going? What changed in the environment?

Core principles that make systems thinking work

Core principles turn a scatter of events into an actionable picture leaders can use. These ideas guide where to look first and how to test small changes without mapping everything.

Interconnections across people, processes, tools, and information

Outcomes arise from handoffs and dependencies across people, process steps, tooling limits, policies, and information flows. Optimization that ignores handoffs often shifts the problem downstream.

Feedback loops that reinforce or balance outcomes

Identify reinforcing loops that amplify change and balancing loops that stabilize it. Reinforcement can speed capability gains; a balancing loop like service recovery can limit customer loss if capacity exists.

Delays, bottlenecks, and ripple effects

Hiring lead time, procurement cycles, and approval queues create delays that make leaders overcorrect. Small intake rule changes can ripple and distort downstream metrics.
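A short sketch can show why delays lead to overcorrection. It assumes a hypothetical team that wants 50 people, starts with 40, and waits three periods for each hire to arrive. The function name, the numbers, and the absence of attrition are all simplifying assumptions.

```python
from collections import deque


def simulate_hiring(target=50, start=40, lead_time=3, periods=12,
                    count_pipeline=False):
    """Return headcount per period when hires arrive after a delay.

    Assumes lead_time >= 1 and no attrition (illustrative simplifications).
    """
    staff = start
    pipeline = deque([0] * lead_time)  # requests already made, not yet arrived
    history = []
    for _ in range(periods):
        staff += pipeline.popleft()    # hires requested lead_time periods ago arrive
        gap = target - staff
        if count_pipeline:
            gap -= sum(pipeline)       # account for hires already on the way
        pipeline.append(max(0, gap))   # request enough hires to close the perceived gap
        history.append(staff)
    return history


naive = simulate_hiring(count_pipeline=False)  # reacts only to today's headcount
aware = simulate_hiring(count_pipeline=True)   # also counts hires in the pipeline
print(max(naive), max(aware))  # 70 50
```

The naive policy keeps requesting ten hires per period while earlier requests are still in flight, so headcount overshoots the target by 20. Making the pipeline visible removes the overshoot without changing the lead time itself.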

Emergence and synthesis

Well-designed cross-functional work can make the whole outperform the parts. Shared platforms, for example, cut rework and lift customer experience at the same time.

Boundaries and scope

Choose a scope big enough to include key drivers but small enough to test. Define what is inside versus outside the model and update scope as learning improves.

Checklist: before launching solutions, identify loops, delays, constraints, and places where whole-system outcomes diverge from sum-of-parts metrics.

Systems thinking mindsets that improve how leaders frame questions

When leaders adopt practical inquiry habits, teams surface constraints faster and with less conflict.

Use the camera metaphor: zoom in to inspect frontline work, then zoom out to see the context. This habit prevents both over-focusing on one visible issue and abstracting away from the people who do the work.

Shift perspective across stakeholders

Run a quick stakeholder routine in meetings. Map how engineering, sales, operations, finance, and customers define success.

Compare those views to reveal hidden constraints and misaligned incentives. This exposes how differing priorities drive recurring issues.

Be aware of one’s own lens

Teach lens awareness with an example: finance sees cost variance; support sees repeat contacts. Both are true. Both are partial.

Label those biases in facilitation to avoid blame and to build shared understanding.

Reframe to avoid solving the wrong problem

Use prompts in the spirit of Russell Ackoff’s work on problem framing: “What are we optimizing for, and what are we sacrificing?” “Who benefits and who absorbs the cost?” “What would make this problem disappear without working harder?”

| Mindset | Prompt or question | Meeting behavior |
|---|---|---|
| Zoom in/out | What is happening on the front line? What else affects it? | Alternate field reports and strategy pause |
| Shift perspective | How do others define success? | Role-based breakout and compare notes |
| Lens awareness | What assumptions shape our view? | State assumptions and test with data |

Practice note: Pair these mindsets with human-centered inquiry from IDEO U to surface motivations, unmet needs, and social norms that shape real change.

“We fail more often because we solve the wrong problem than because we get the wrong solution to the right problem.” (Russell Ackoff)

Systems mapping tools to see the whole picture

Mapping turns scattered observations into a testable model for action. Maps help teams see connections, reveal missing information, and focus on where change matters.

Rich pictures for rapid alignment

Rich pictures let people sketch actors, pain points, and hidden assumptions. They are fast and low-stakes.

Good use: 15–30 minutes at the start of a session to set boundaries and surface conflicting goals.

Causal loop diagrams to reveal dynamics

Causal loop diagrams (CLDs) show the reinforcing and balancing feedback loops in a system. They make interdependencies visible so teams can predict where an intervention might backfire.

Practical mapping flow

- Define purpose and scope.

- List key variables and actors.

- Connect causality arrows and label delays.

- Identify and label loops, then mark leverage points.
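The loop-labeling step in the flow above can be sketched in code. The example treats a causal loop diagram as a set of signed links (+1 when cause and effect move in the same direction, -1 when they move in opposite directions) and applies the usual CLD convention: a loop with an even number of negative links is reinforcing, otherwise it is balancing. The variable names and links are hypothetical, and delays are left out for brevity.

```python
def find_loops(links):
    """Find each simple loop once and label it reinforcing or balancing.

    links maps (cause, effect) -> +1 or -1. Illustrative sketch, not a
    full diagramming tool.
    """
    graph = {}
    for (cause, effect), sign in links.items():
        graph.setdefault(cause, []).append((effect, sign))

    found = []

    def walk(start, node, path, polarity):
        for nxt, sign in graph.get(node, []):
            if nxt == start:
                found.append((path, polarity * sign))      # closed a loop
            elif nxt not in path and nxt > start:
                # Only visit names that sort after the start,
                # so each loop is reported exactly once.
                walk(start, nxt, path + [nxt], polarity * sign)

    for start in sorted(graph):
        walk(start, start, [start], 1)

    return [(path, "reinforcing" if polarity > 0 else "balancing")
            for path, polarity in found]


# Hypothetical links for two loops discussed in this guide.
links = {
    ("overtime", "quality"): -1,   # more overtime, lower quality
    ("quality", "rework"): -1,     # lower quality, more rework
    ("rework", "overtime"): +1,    # more rework, more overtime
    ("backlog", "hiring"): +1,     # bigger backlog, more hiring
    ("hiring", "capacity"): +1,    # more hiring, more capacity
    ("capacity", "backlog"): -1,   # more capacity, smaller backlog
}
loops = find_loops(links)
```

Here the overtime loop comes out as reinforcing and the hiring loop as balancing. Keeping the links in a plain data structure also makes it easy to version the map alongside planning documents.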

Common diagramming mistakes

- Too many variables—maps become busy and unusable.

- Unclear definitions—different people use the same word differently.

- Mixing symptoms with structural drivers—confuses action planning.

Turn maps into a shared language

Agree on variable definitions, version maps, and use them in planning and retrospectives. Treat every map as a learning tool, not the final deliverable.

“Maps help teams ask better questions and design safer experiments.”

Quantitative system dynamics models for evidence-based decisions

Quantitative models translate causal maps and operational signals into time-based scenarios leaders can use for safer choices.

When qualitative maps are enough and when analytics should be added

Qualitative mapping works for early discovery, alignment, and low-risk hypotheses. It guides fast learning and surfaces assumptions.

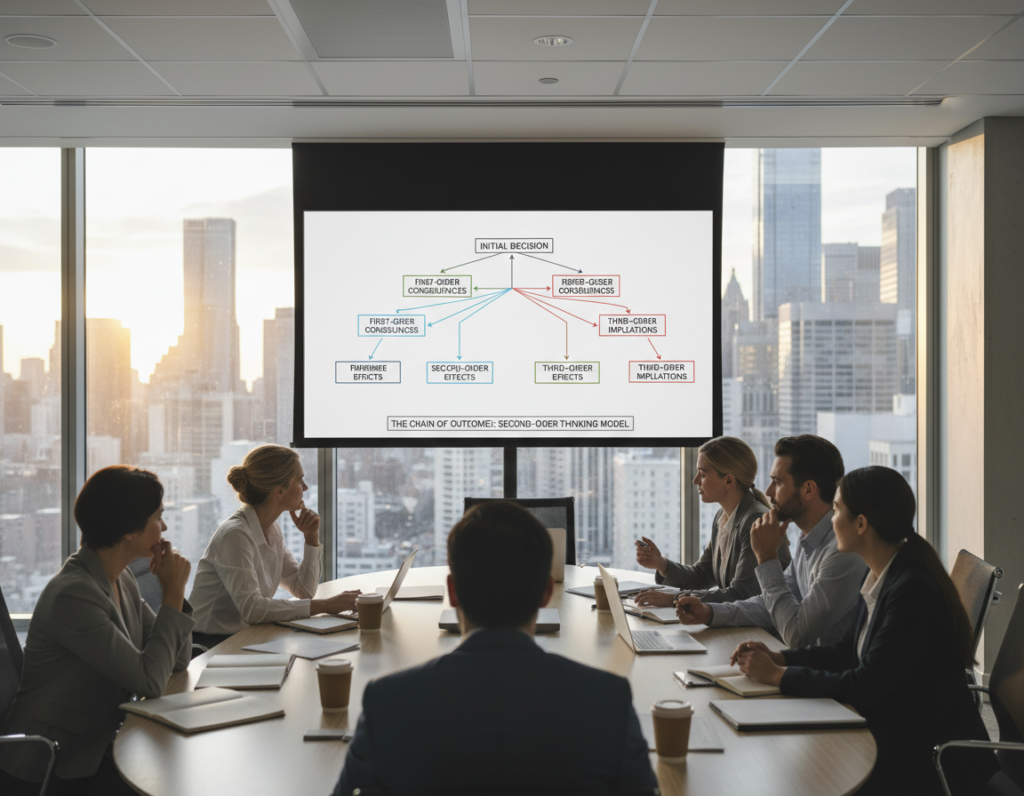

Add quantitative modeling when stakes are high: capacity investments, long delays, service-level commitments, or policy changes that can cause costly second-order effects.

Using operational data to test “what if” scenarios

Turn a causal diagram into a stock-and-flow model and calibrate with arrival rates, cycle times, backlog, and attrition.

Run scenarios to compare outcomes over time before rollout. Leaders get visible trade-offs instead of opinion-based debate.
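As a minimal sketch of a stock-and-flow model, the example below treats backlog as the stock, arrivals as the inflow, and completed work as the outflow limited by capacity. The function name, scenario labels, and every number are assumptions for illustration; a real model would be calibrated with the organization's own arrival rates and cycle times.

```python
def simulate_backlog(arrivals_per_day, staff, tickets_per_person_per_day,
                     days=30, initial_backlog=0.0):
    """Return the backlog at the end of each day (hypothetical inputs)."""
    backlog = initial_backlog  # the stock
    trajectory = []
    for _ in range(days):
        capacity = staff * tickets_per_person_per_day
        # Outflow: the team cannot complete more than exists or than capacity allows.
        completed = min(backlog + arrivals_per_day, capacity)
        backlog = backlog + arrivals_per_day - completed
        trajectory.append(backlog)
    return trajectory


# Compare two "what if" staffing scenarios before rollout.
scenarios = {"current staffing": 10, "add two people": 12}
results = {
    name: simulate_backlog(arrivals_per_day=100, staff=staff,
                           tickets_per_person_per_day=9)[-1]
    for name, staff in scenarios.items()
}
print(results)  # {'current staffing': 300.0, 'add two people': 0.0}
```

With these assumed inputs, ten people fall ten tickets short every day and the backlog reaches 300 after a month, while twelve people keep it at zero. The value of the exercise is the visible trade-off, not the specific numbers.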

Forecasting second-order effects and trust practices

Forecasts show how staffing shifts change queue length, how capacity limits alter service levels, and how cost moves with rework or churn.

Make assumptions explicit, run sensitivity checks, and compare model behavior to historical patterns.
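A sensitivity check can be as simple as varying one assumption at a time and watching how much the result moves. The sketch below uses a deliberately small backlog model with hypothetical inputs (100 arrivals per day, capacity of 105) and a plus or minus 10% swing; the names and numbers are assumptions.

```python
def final_backlog(arrivals, capacity, days=30):
    """Backlog after `days` when daily arrivals meet a fixed daily capacity."""
    backlog = 0.0
    for _ in range(days):
        backlog = max(0.0, backlog + arrivals - capacity)
    return backlog


def sensitivity(base_arrivals, base_capacity, swing=0.10):
    """Vary one assumption at a time by +/- swing and record the final backlog."""
    rows = []
    for name in ("arrivals", "capacity"):
        for factor in (1 - swing, 1 + swing):
            arrivals = base_arrivals * factor if name == "arrivals" else base_arrivals
            capacity = base_capacity * factor if name == "capacity" else base_capacity
            rows.append((name, factor, final_backlog(arrivals, capacity)))
    return rows


for name, factor, backlog in sensitivity(base_arrivals=100, base_capacity=105):
    print(f"{name} x {factor:.1f}: final backlog {backlog:.0f}")
```

In this example a 10% error in either assumption flips the forecast from no backlog to roughly 150 to 165 open items, which signals that both inputs deserve careful measurement before anyone relies on the model.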

| Use case | Key data | Primary output | Decision value |
|---|---|---|---|
| Capacity planning | Arrival rate, service time | Queue length over time | Staffing and shift design |
| Service commitment changes | Cycle times, SLAs, backlog | Service level trajectories | Risk and compensation trade-offs |
| Cost-impact assessment | Rework rates, churn, unit cost | Projected cost growth | Investment vs mitigation choices |
| Policy with long delay | Lead time, attrition | Delayed response curves | Phased rollout and monitoring |

Practical note: Models support better decisions by quantifying second-order effects. They are decision aids, not guarantees; pair them with frontline tests and staged pilots.

Systems thinking business applications for innovation, sustainability, and operations

Practical applications show how a connected view turns isolated fixes into repeatable, measurable gains across product, operations, and sustainability.

Designing integrated processes aligns incentives, shared platforms, and handoffs so improvements compound across departments instead of shifting work downstream.

Reduce chronic rework by changing intake rules, clarifying a common definition of “done,” and trimming overloaded review steps. This replaces blame with structural fixes and lowers repeat tasks.

Build circularity and efficiency by looping materials, reusing byproducts, and treating waste as input. Such process design boosts resilience and reduces cost over time.

Two brief examples

Traffic congestion: Reframe from “reduce traffic” to “help people move.” Copenhagen shows how cycling lanes, better transit, and smarter signal timing change behavior and cut delay.

Customer-service software: A new tool can expose integration gaps, raise tickets, and require training capacity. Plan training loops, monitor first-contact resolution, and add capacity buffers to avoid service dips.

Measure what matters: track end-to-end cycle time, rework rates, first-contact resolution, and sustainability metrics—not just silo KPIs.
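To make these measures concrete, here is a small sketch that computes three of them from work-item records. The record fields (`opened`, `closed`, `reopened`, `contacts`) and the sample data are hypothetical; real field names depend on the ticketing or workflow tool in use.

```python
from datetime import date

# Hypothetical records; a real export would come from the team's own tooling.
tickets = [
    {"opened": date(2024, 1, 1), "closed": date(2024, 1, 4), "reopened": False, "contacts": 1},
    {"opened": date(2024, 1, 2), "closed": date(2024, 1, 7), "reopened": True, "contacts": 3},
    {"opened": date(2024, 1, 3), "closed": date(2024, 1, 5), "reopened": False, "contacts": 1},
    {"opened": date(2024, 1, 4), "closed": date(2024, 1, 10), "reopened": False, "contacts": 2},
]


def end_to_end_metrics(records):
    """Summarize cycle time, rework, and first-contact resolution."""
    n = len(records)
    cycle_days = [(r["closed"] - r["opened"]).days for r in records]
    return {
        "avg_cycle_days": sum(cycle_days) / n,                  # open to close, across all teams
        "rework_rate": sum(r["reopened"] for r in records) / n,  # share of items reopened
        "first_contact_resolution": sum(r["contacts"] == 1 for r in records) / n,
    }


metrics = end_to_end_metrics(tickets)
print(metrics)
# {'avg_cycle_days': 4.0, 'rework_rate': 0.25, 'first_contact_resolution': 0.5}
```

Because each record spans the whole journey from open to close, these numbers reflect what the customer experiences rather than how any single team performed.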

Implementing systems thinking in organizations without losing momentum

Start small, show value, and expand deliberately. Focus first on one recurring issue and invite a mix of roles to map it in a single afternoon.

How to run collaborative modeling sessions

- Clarify purpose and scope in two sentences.

- Invite cross-functional participants who touch the work.

- Capture multiple mental models, then converge on a shared map.

- Finish with two to three testable interventions and owners.

Build trust and buy-in. Participation creates ownership. Visible maps reduce misinterpretation. Documenting assumptions makes disagreements discussable, not political.

Use champions to land quick wins like lower rework or faster cycle time. Tell short stories that show impact. When leaders resist, ask curious questions and add their constraints as variables in the model.

Balance silos and integration. Set cross-functional cadences and shared metrics while protecting needed specialization. Train and coach internal practitioners to run sessions and keep models alive.

Teams should act inside their sphere of influence: change policies, handoffs, or dashboards they can actually control.

Embed the practice into planning, risk reviews, product gates, and evaluations so learning compounds over time.

Conclusion

Adopting a holistic lens helps teams turn recurring fixes into lasting improvements they can measure.

Systems thinking replaces isolated fixes with a way of improving decisions by mapping interconnections, feedback loops, delays, and emergence. Leaders gain practical tools: rich pictures and causal loop diagrams to align teams, and quantitative models to test key scenarios when stakes rise.

Next steps: pick one chronic operational problem, run a cross-functional mapping session, select one leverage point, and run a small experiment with clear measures and timelines.

To stay trustworthy, make assumptions explicit, watch for second‑order effects, and treat maps and models as living tools for learning. Remember the broken gear: lasting solutions come from changing the conditions that keep it breaking, not repeatedly replacing the part.